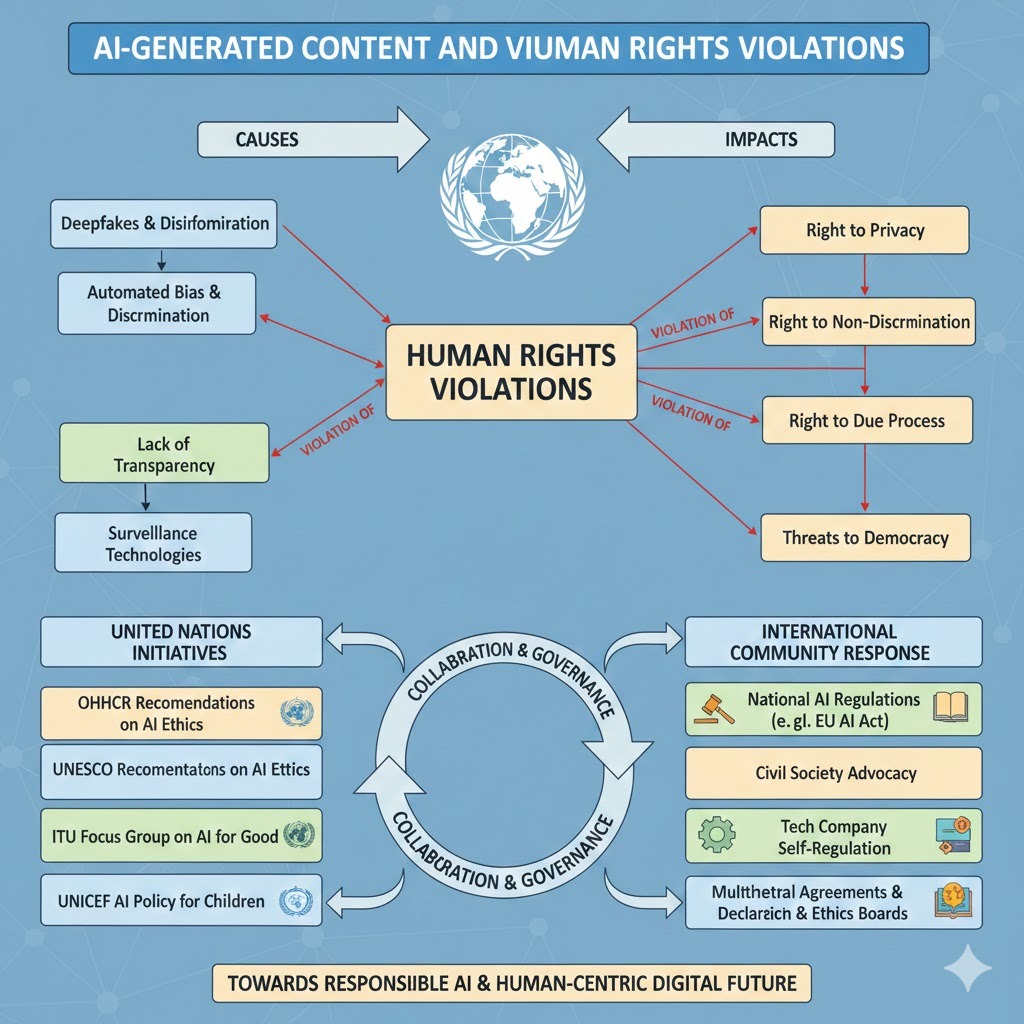

AI-Generated Content and Violation of Human Rights: United Nations Initiatives and International Community Response

DOI:

https://doi.org/10.64060/ICPP.11

Keywords:

AI-Generated Content, Violation of Human Rights, International Community, Human Rights

Abstract

While AI technologies offer significant social and economic benefits, they have also enabled new forms of harm, including non-consensual deepfakes, synthetic child sexual abuse material, and large-scale disinformation. These practices pose direct threats to internationally protected human rights, particularly the rights to privacy, dignity, equality, freedom of expression, and participation in public life. This article argues that although the United Nations has increasingly acknowledged the human rights risks associated with artificial intelligence, its existing governance frameworks remain largely abstract and insufficiently tailored to the specific harms caused by AI-generated content. The absence of precise legal standards and enforceable obligations has contributed to fragmented national responses, inconsistent platform accountability, and limited access to effective remedies for victims. Using a doctrinal and policy-analysis approach, the study examines relevant UN initiatives alongside comparative regulatory practices in the United States, the European Union, the United Kingdom, China, Russia, and Pakistan. It demonstrates that current international and domestic responses struggle to attribute responsibility, enforce obligations, and protect victims in cases involving synthetic media. It concludes that a more targeted, human-rights-based approach to AI-generated content is required: one that moves beyond general ethical principles to provide clear legal guidance, shared responsibility among key actors, and meaningful remedies for affected individuals.

License

Copyright (c) 2026 Jalil Ahmad (Author)

This work is licensed under a Creative Commons Attribution 4.0 International License.